Why ChatGPT Isn’t Your Therapist: A Hopeful Vision for AI & Mental Health

Written by

Melissa Andrada, MSc (mel - they/them)

CEO & Co-Founder

Dr. Suzan Ahmed, Ph.D. (she/her)

Clinical Psychologist & Executive Coach

How can we embody a hopeful vision for the future of our minds and bodies within the workplace?

Photo by Jesse Whiles

Last summer in London, we brought together People Leaders to reflect on how we can better support employees’ mental health, particularly as artificial intelligence becomes a more present part of our daily lives. We discussed the general strengths and limitations of AI, from concerns about privacy and data security, to the usefulness of AI for administrative tasks, to overreliance on AI for mental health support.

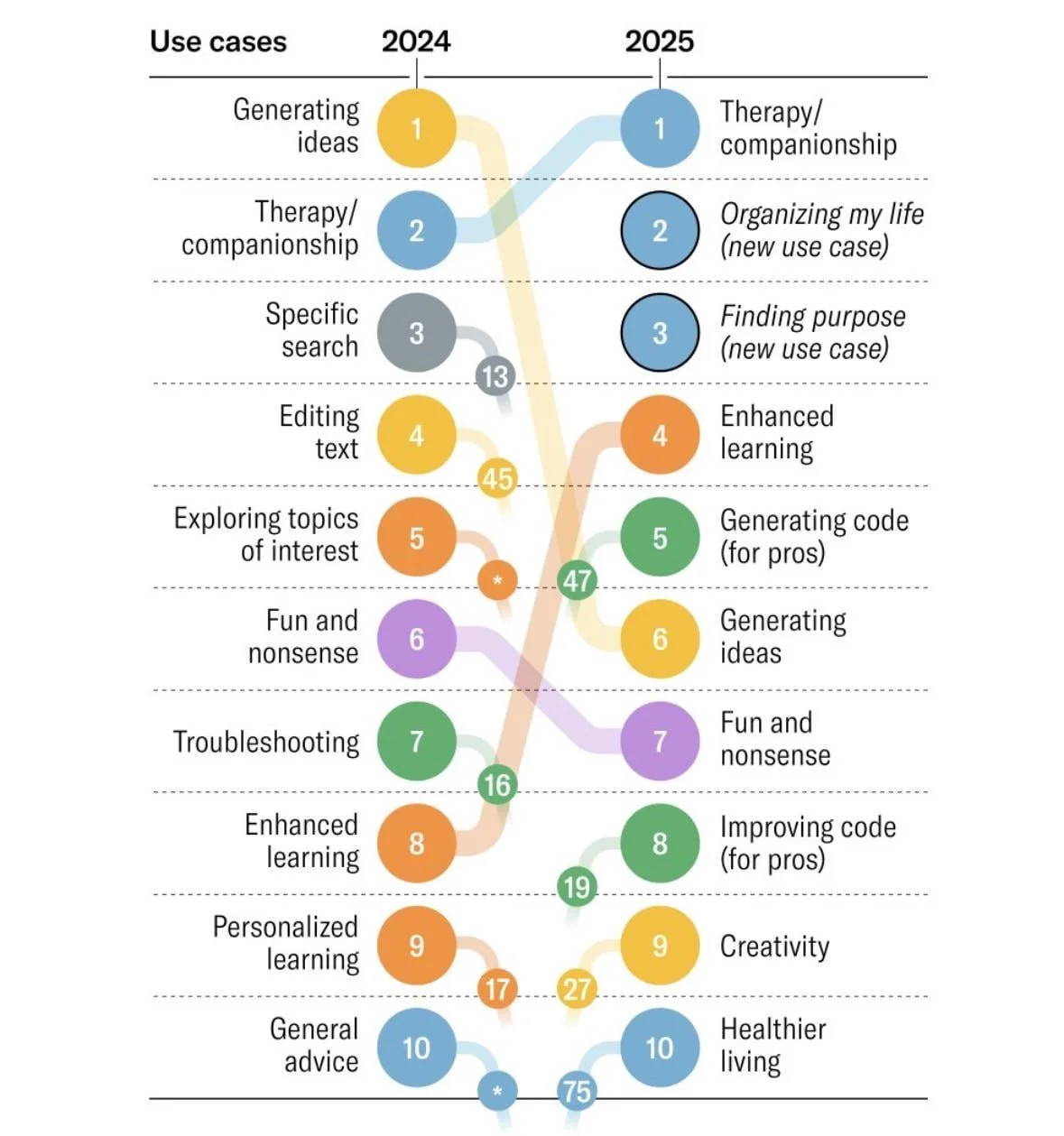

In 2024, the #1 use case of AI was generating ideas. In 2025, the #1 use case was therapy and companionship. Given the loneliness epidemic, which impacts 1 in 4 people globally, it’s not surprising that people are turning to ChatGPT and other AI tools for empathy and therapeutic support. As clinical researchers and practitioners, we may be tempted to offer a blanket rejection of AI, claiming it is detrimental to the mental health and wellbeing of our people. However, this isn’t the full story; the reality is more nuanced.

Part of what makes us human is a deep capacity to see nuance and to write into being realities that do not yet exist. In this essay, we offer a heartfelt strategic analysis of artificial intelligence in the context of mental health, rooted in the latest research in neuroscience, somatics, and clinical psychology, alongside our lived experiences as survivors of trauma and oppression. Inspired by the lineage of Narrative Therapy, our invitation is to re-author a collective story of artificial intelligence that reduces human suffering and brings deeper connection, within the workplace and beyond.

Part 1. Why People Use ChatGPT: How AI Supports Our Mental Health

Part 2. How AI Can Harm: The Risks of AI for Mental Health

Part 3. Why AI Isn’t Enough: The Psychology of Human Healing

Part 4. Four Strategies for Supporting Yourself and Your Team Around AI & Mental Health

Part 5. Conclusion: Human-like Doesn’t Mean Human

1. Why People Use ChatGPT: How AI Supports Our Mental Health

Photo by Spencer Ian Harris

Before we dive into why AI is not the answer to our mental health problems, it is worth exploring why artificial intelligence has become a pervasive phenomenon in our human reality.

Immediate Validation

One of the most apparent benefits of seeking ChatGPT's support is the immediate sense of validation it provides. Much like face-to-face therapists, AI chatbots offer responses that affirm our experience of the problem at hand, but they do so almost immediately. This positivity, which can feed our own confirmation bias, combined with speed, creates a reinforcing experience that encourages repeated use. In an instant, we can feel heard, listened to, and seen, even if not physically.

Additionally, for some, turning to ChatGPT can serve as a healthier alternative to coping strategies like drinking, smoking, or other addictions.

Anonymity

Similar to crisis support lines, anonymity is another reason many find AI appealing for support. Chatbots remove the vulnerability of sharing openly with another person and can bypass barriers in human therapy, such as identity-related biases. This important feature can also greatly reduce or eliminate the resistance some folks may experience when considering therapeutic support with a person. For some, this can be the difference between receiving mental health care and navigating challenges on their own.

Affordability

Another benefit of AI is its high accessibility and affordability. The average cost of therapy in the United States ranges from $100 to $200 per session, and from €100 to €150 in Europe. Additionally, according to new data from the World Health Organization (WHO), over 1 billion people are living with mental health conditions, yet most do not receive adequate care. This global crisis calls for immediate intervention through accessible, affordable healthcare.

The immediacy, accessibility, and anonymity of generative AI contribute to its growing use among youth for mental health support: 22 percent of young adults aged 18 to 21 report having used it for mental health advice. This suggests that AI is perceived as a valuable form of support, especially among young adults, who may otherwise avoid or be unable to afford costly therapy sessions.

Efficiency

For therapists, artificial intelligence can be a valuable tool in managing client care. Many clinicians experience high levels of burnout and compassion fatigue, and AI can help reduce the administrative burden of record keeping and documentation. It can also support clinicians in generating hypotheses and making efficient decisions, especially in complex cases.

Yet as we consider these advantages, we must also ask ourselves: can AI truly meet the deeper needs it seems to address?

2. How AI Can Harm: The Risks of AI for Mental Health

While AI offers a quick, accessible alternative to traditional therapy or crisis support, research shows that relying too heavily on AI for mental health—especially in serious cases—can have harmful and sometimes dangerous consequences.

Photo by Aidin Geranrekab, Unsplash

Safety Risks and Suicidality

Since the rise of AI and ChatGPT, numerous cases involving safety, suicidality, and other serious mental health conditions have been reported.

Among them are cases of people being convinced or assisted by AI to end their lives, and of people who turned to ChatGPT to ease the administrative burdens of work, only to spiral quickly into delusional psychosis. Dr. Joseph Pierre, a psychiatrist specializing in psychosis at the University of California, San Francisco, has commented, “There’s something about these [chatbots/LLMs] — it has this sort of mythology that they’re reliable and better than talking to people. And I think that’s where part of the danger is: how much faith we put into these machines.”

Social Isolation

Even if such cases of suicidality and harm are rare, research shows that excessive reliance on AI can undermine our mental and emotional wellbeing. Relying on AI instead of human connection can contribute to social isolation and depression.

Human-Like Doesn’t Mean Human

One appealing aspect of ChatGPT is its ability to provide human-like responses, whether or not they are factually accurate. This provides the feeling of being in a human interaction without requiring vulnerability. It is a short-term “solution” to discomfort, but one that comes at the cost of never actually learning how to sit with vulnerability, an important aspect of social relationships.

Misinformation about Mental Health

Other critiques of AI include the potential to perpetuate misinformation and blur the lines between high- and low-quality content. Because ChatGPT lacks real-time fact-checking, erroneous or misleading information is common. The implications of this in a world already affected by disinformation are alarming.

Outsourced Agency

In addition to increasing the risk of social isolation and misinformation, emerging research suggests that dependence on AI can outsource our sense of agency and hinder our ability to self-soothe, think critically, and develop neurological resilience in the face of life’s stressful experiences. Perhaps most critical to consider, however, is how relying on AI can become a slippery slope toward disconnecting from one’s sense of embodied belonging and relating with others; these are some of the qualities that make us, well, human.

AI dependence has been associated with concerns over the loss of human connection, highlighting the importance of in-person community engagement.

Photo by: Gillian Stargensky

Disconnection

Research from McBain and colleagues has shown an increase in AI usage for mental health support among adolescents and young adults in the U.S., likely due to the low cost, privacy, and immediacy of AI-based support. Although it is positive for young people to advocate for their mental and emotional wellbeing, it is important to consider the impact of AI on brains that are still maturing.

Additionally, Black youths participating in the same study reported lower perceived helpfulness than other respondents, highlighting gaps in cultural sensitivity and the limitations of AI in accounting for important lived experiences and identities.

Increased reliance on AI has also been associated with reduced perceived emotional support and increased concerns about the loss of human connection among higher education students. This suggests that while AI may offer an immediate sense of support, its dehumanizing effect on our social connections can be felt and may grow over time.

This invites the question of how we can humanize artificial intelligence to facilitate social connection and agency.

3. Why AI Isn’t Enough: The Psychology of Human Healing

As mental health facilitators and clinicians, our lived experiences affirm what psychotherapy research has consistently demonstrated: the quality of the therapist-client relationship contributes more significantly to healing outcomes in therapy than the modality or type of therapy itself. Research on psychotherapy outcomes suggests that approximately 15% of treatment effects are attributable to specific therapeutic techniques, whereas common factors—particularly the therapeutic alliance—account for roughly 30% of the variance in outcomes.

To be fair, ChatGPT can offer some basic therapy skills, following the OARS model reasonably well. OARS is a framework associated with psychologists William R. Miller and Stephen Rollnick, pioneers of Motivational Interviewing. This model suggests that effective therapy includes a therapist’s ability to ask open-ended questions, provide affirmations, accurately reflect a client’s experiences, and summarize what is shared in a comprehensive yet concise manner. While ChatGPT has been shown to respond to “clients” with these important skills, the fact remains that a relationship between therapist and client in an AI context lacks the embodied, felt experience of a human who is present, conscious, and witnessing a client’s healing process.

Photo by Gillian Stargensky

A 2019 meta-analysis by Norcross and Wampold highlighted the factors that seem to characterize “good” therapy, regardless of therapeutic model. These factors include empathy, therapist authenticity, and goal consensus. Therapist Jason N. Lynder, PsyD, also emphasizes the essential skill of attunement, or the “therapist’s capacity to deeply sense and respond to the emotional state of the client.” Examples of this include noticing non-verbal responses in the client, such as tears or pauses in the conversation, as well as paying attention to what might lie beneath what the client is actually saying. As Lynder explains, “It’s about feeling with someone rather than simply doing (therapy) to them.”

“Therapy is more than thinking and words.

Therapy is far more than speaking and listening.

Therapy is being present, tuning into energy

slowing down the heart rate and breathing together

giving each story, each word, each gesture, each movement

a caring pause and attention.”

This distinction between feeling with and doing therapy is critical, as it highlights what may be missing from AI-generated therapy sessions, which are increasingly used for mental health support. Lynder points out, “Clients usually don’t remember therapists for their theoretical brilliance. They remember how it felt to be with their therapists. Were we [therapists] safe? Were we real? Did we see them clearly?”

If you’re experiencing fear or dysregulation at work, literally feeling the hand of someone you trust on your back can calm the nervous system faster than talking or seeking support from AI alone.

Photo by Marianna Silvano

“Holding space means to be with someone without judgment. To donate your ears and heart without wanting anything back. To practice empathy and compassion. To accept someone’s truth, no matter what they are. To allow and accept. Embrace with two hands instead of pointing with one finger. To come in neutral. Open. For them. Not you. Holding space means to put your needs and opinions aside and allow someone to just be.”

Therapy means tuning into your inner world and nervous system to support your clients' nervous systems. It begins and ends with the holistic body. The health of our nervous system is one of the most important factors in our wellbeing and our capacity to function well at work. In fact, studies estimate that over 87% of our nervous system activity is below the surface of our “thoughts.”

Psychotherapist Resmaa Menakem, author of My Grandmother's Hands: Racialized Trauma and the Pathway to Mending Our Hearts and Bodies, shared, “Recent studies and discoveries increasingly point out that we heal primarily in and through the body, not just through the rational brain. We can all create more room and more opportunities for growth in our nervous systems. But we do this primarily through what our bodies experience and do—not through what we think or realize or cognitively figure out.”

According to Dr. Elsie Jones-Smith, being in the company of someone we feel safe with literally changes our brain chemistry. Neurological mirroring occurs when interpersonal reactions are experienced as being nonjudgmental and respectful. This occurs when our eyes are met with a caring gaze, our words of shame and distress are honored with intentional pause and listening, our breath deepens together, and our hearts beat in sync with a shared rhythm.

Co-regulation occurs when two people’s nervous systems influence each other’s physiological and psychological state, helping to promote emotional balance.

Image by Beatriz Camaleão, Unsplash

These simple yet powerful somatic interventions activate the parasympathetic nervous system, which governs the body’s “rest and digest” functions. This enables us to reconnect with our prefrontal cortex and executive functioning more effectively, so we show up with greater clarity for grounded decision-making and collaboration. When our nervous systems are under high pressure and stress, as is so often the case at work, we can create an embodied shortcut to a calmer collective nervous system through co-regulation.

Research on polyvagal theory and the nervous system suggests that healing stress and trauma requires an embodied approach. In many cases, talking (or typing) is not enough to manage stress and trauma responses. Instead of a top-down approach that processes experience with the mind first, a bottom-up approach, starting with the physical body, is necessary. Navigating emotional experiences and sustaining deep healing over time requires us to be consistently present in our bodies, in relation to others’ bodies.

4. Four Strategies for Supporting Yourself and Your Team Around AI & Mental Health in the Workplace

Photo by Jesse Whiles

Educate your team on how we can use AI with integrity for mental health and resilience. Host a roundtable lunch to discuss how people are using AI to support their mental health and work.

You can invite discussion around these prompts:

How has artificial intelligence improved your life and work? How has it supported your mental health, wellbeing, and productivity?

What are your concerns around using artificial intelligence? What are its limitations?

How can artificial intelligence be integrated into our professional and personal lives with integrity? How can we facilitate more meaningful human connection and belonging at work?

Come with openness, curiosity, and affirmation as a manager and leader. Judging, shaming, or blaming people for using artificial intelligence for mental health support, when they may feel they have no one else to turn to, will often only create more division and isolation.

In your 1:1 conversations, you can explore language like (adjusting to the person and context): “I’m glad you want to take steps to support your mental health. I’m wondering if you’d like additional support. I’d be happy to help you find resources, a therapist, or a coach.”

Honor differences in the lived experiences of your team members. Consider how culture and identity may impact how individuals perceive AI, whether or not it is supportive to them, and why. Seek to understand and hold space for varying perspectives.

Empower self-awareness and agency around your team’s mental health and wellbeing, rather than relying on AI as the main authority on mental health challenges or interpersonal conversations and conflicts.

Provide psychoeducation on the role and modalities of therapeutic and clinical support, particularly grounded in the latest research in neuroscience, somatics, and clinical psychology.

Evaluate employee benefits to ensure people can easily access the mental health support they need. Create a list of therapists, coaches, and practitioners. Here’s a compilation of therapy directories based in the United States where you may find someone suited to your needs:

Practice compassion and empathy for the (often) difficult process of finding a therapist or coach who feels aligned with one’s values and emotional needs.

Use AI to facilitate meaningful in-person connections and events, both as a team and in people’s personal lives outside work. Examples include:

Strava -> in-person connection through running, hiking & sports

Resident Advisor -> in-person connection through music

Couchsurfing -> in-person connection through travel

Eventbrite -> in-person connection through leadership & identity

Meetup -> in-person connection through shared hobbies & interests

The Breakfast -> in-person connection over breakfast

Credit: Farsai Chaikulnga, Unsplash

Conclusion: Human-like doesn’t mean human.

AI has the potential to support, but not replace, what makes us human.

Whether we like it or not, AI is here to stay. While it brings challenges—including environmental impact, data security concerns, and the risk of harmful psychological effects when overused—it also has the capacity to facilitate a deeper understanding of ourselves and to create important in-person connections, when used thoughtfully. With or without AI, our goal should be to empower ourselves and our teams to care for mental health and wellbeing with greater skill and intention.

We can’t afford to lose the parts of us that make us human, or to lose sight of the nervous system. Because so much of the psychological wounding that shows up in the workplace is relational, we need to care for our mental health and resilience in person and in community. Ultimately, AI has to lead to embodied in-person connection and co-regulation to have a lasting impact on our everyday wellbeing.

Reflecting on the most recent Web Summit in Lisbon, Portugal, we keep returning to one statement by CEO Paddy Cosgrave that continues to ring true: “AI can’t replace self-awareness.” Acknowledging this truth, we can move towards understanding the boundaries between where AI contributes to our collective evolution and inclusive health and wellbeing, and where it doesn’t.

It is urgent and necessary to consider the ethical and moral issues surrounding AI use and its impact on our current and future reality. Collectively, we have a responsibility to further our understanding of AI and its impact, which demands rigorous, longitudinal research and widespread education about its risks and benefits.

Photo by: Cash Macanaya, Unsplash

In a case study of ChatGPT as it concerns public safety, Guo and colleagues (2023) reason, “Although no conclusive evidence suggesting AI will surpass human control exists, AI has the potential for such an outcome.” Michal Kosinski, a computational psychologist at Stanford University, has proposed that LLMs like ChatGPT could spontaneously develop their own “theory of mind,” including self-awareness and morality. This raises the question: Do we want to fully give up our agency to AI before we no longer have a choice?

It is important for us to move beyond the binary argument of AI as “good” versus “bad” and instead, towards a more nuanced discussion of how we can work more skillfully and mindfully with it. We can learn to use AI with intention rather than as a tool to bypass the development of the necessary skills for emotional regulation and human connection. Educating ourselves on the limitations and risks of AI, while promoting healing in community with other humans, must be the path forward if we want to protect the core qualities of living a human life: embodied presence, attunement, and connection.

References & Recommended Reading

Al-Zahrani, A. M. (2025).

Exploring the impact of artificial intelligence chatbots on human connection and emotional support among higher education students. SAGE Open.

https://doi.org/10.1177/21582440251340615

Birtchnell, J. (1984).

Dependence and its relationship to depression. British Journal of Medical Psychology, 57, 215–225.

https://doi.org/10.1111/j.2044-8341.1984.tb02581.x

Ge, L., Yap, C. W., Ong, R., & Heng, B. H. (2017).

Social isolation, loneliness and their relationships with depressive symptoms: A population-based study. PLOS ONE, 12(8), e0182145.

https://doi.org/10.1371/journal.pone.0182145

Gerlich, M. (2025).

AI tools in society: Impacts on cognitive offloading and the future of critical thinking. Societies, 15(1), Article 6.

https://doi.org/10.3390/soc15010006

Grabbe, L., Higgins, M. K., & Baird, M. (2017).

The trauma resiliency model: A “bottom-up” intervention for trauma. Journal of the American Psychiatric Nurses Association, 23(1), 76–84.

https://doi.org/10.1177/1078390316653265

Guo, D., Chen, H., Wu, R., & Wang, Y. (2023).

AIGC challenges and opportunities related to public safety: A case study of ChatGPT. Journal of Safety Science and Resilience, 4(4), 329–339.

https://doi.org/10.1016/j.jnlssr.2023.08.001

Keshavan, M., Torous, J., & Yassin, W. (2026).

Do generative AI chatbots increase psychosis risk? World Psychiatry, 25(1), 150–151.

https://doi.org/10.1002/wps.70017

Lambert, M. J., & Barley, D. E. (2001).

Research summary on the therapeutic relationship and psychotherapy outcome. Psychotherapy: Theory, Research, Practice, Training, 38(4), 357–361.

https://doi.org/10.1037/0033-3204.38.4.357

McBain, R. K., Bozick, R., Diliberti, M., Zhang, L. A., Zhang, F., Burnett, A., Kofner, A., Rader, B., Breslau, J., Stein, B. D., Mehrotra, A., Uscher-Pines, L., Cantor, J., & Yu, H. (2025).

Use of generative AI for mental health advice among US adolescents and young adults. JAMA Network Open, 8(11), e2542281.

https://doi.org/10.1001/jamanetworkopen.2025.42281

Menakem, R. (2017).

My Grandmother’s Hands: Racialized Trauma and the Pathway to Mending Our Hearts and Bodies. Central Recovery Press.

Norcross, J. C., & Wampold, B. E. (2019).

A new therapy for each patient: Evidence-based relationships and responsiveness. Journal of Clinical Psychology, 75(11), 1932–1940.

https://pubmed.ncbi.nlm.nih.gov/30334258/

Poli, A., et al. (2021).

A systematic review of a polyvagal perspective on embodied interventions. Frontiers in Psychology, 12, 745037.

https://doi.org/10.3389/fpsyg.2021.745037

Singh, O. P. (2023).

Artificial intelligence in the era of ChatGPT: Opportunities and challenges in mental health care. Indian Journal of Psychiatry, 65(3), 297–298.

https://doi.org/10.4103/indianjpsychiatry.indianjpsychiatry_112_23

Sood, M. S., & Gupta, A. (2025).

The impact of artificial intelligence on emotional, spiritual and mental wellbeing: Enhancing or diminishing quality of life. American Journal of Psychiatric Rehabilitation, 28, 298–312.

https://doi.org/10.69980/ajpr.v28i1.93

University of New Hampshire Institute on Disability. (n.d.).

Motivational interviewing: The basics (OARS).

https://iod.unh.edu/sites/default/files/media/2021-10/motivational-interviewing-the-basics-oars.pdf

Wampold, B. E., & Imel, Z. E. (2015).

The great psychotherapy debate: The evidence for what makes psychotherapy work (2nd ed.). Routledge.

https://doi.org/10.4324/9780203582015